Token architecture

How I rebuilt a token architecture to reduce ambiguity, align design and engineering, and enable durable theming across many products without forcing a single component library.

Where we started

When I joined the design system effort, tokens existed in name, but not in structure. We had values stored as variables, yet the set was rigid, hard to reason about, and inconsistent between design files and code.

Builders could find individual values, but not the decision model: which tokens were foundational vs. contextual, how values should be composed, or where to introduce new ones safely. That uncertainty created review churn and slowed delivery.

The format we used could store values, but it did not encode hierarchy or intent. Tokens behaved like isolated definitions rather than a coherent system teams could extend with confidence.

Who felt the pain

Engineers felt it immediately, but the impact was cross-functional. Design artifacts and code often used different naming conventions, and (before Figma Variables) styles hid the underlying intent.

When engineers received a file for implementation, they had to infer meaning, mapping visual styles to code tokens through assumption instead of a shared taxonomy. The result was slower delivery, rework, and occasional mismatches that reached production.

In a large organization, that drift compounds. If the foundation is unclear, every new surface becomes another chance for divergence.

The turning point

During our 2023 planning cycle, we made a deliberate decision to pause expansion and invest in foundation work. We needed a system that was easier to scale than a growing pile of exceptions.

Tokens were the lever—but only if they carried structure. The goal wasn’t “more tokens.” It was a shared, enforceable model that could translate design intent into code reliably, support multiple themes and contexts, and stay maintainable over time.

Ownership and authorship

I led the token architecture end-to-end: taxonomy, hierarchy, and naming conventions. I reviewed external models, compared mature systems across the industry, and translated those patterns into constraints that matched our environment.

Because our scale and product variety resembled those of the systems I studied, I used the CTI (Category / Type / Item) model as a starting reference and pressure-tested decisions in working sessions with design and engineering stakeholders.

I produced the canonical token map the team aligned around and implemented the resulting variables directly in the design libraries, establishing a shared foundation across design and code.

Design principles

The token system was guided by a few core principles.

- Predictability over exhaustiveness. We focused on a selective set of primitives rather than attempting to encode every possible scenario.

- Flexibility without ambiguity. Tokens needed to communicate intent clearly while supporting multiple themes and contexts.

- Parity across design and code. The same structure, language, and hierarchy had to exist in both environments.
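Parity is easier to keep when it is checked rather than reviewed by eye. As a minimal sketch, assuming both the design library and the codebase can export a flat list of token paths, a check can report names that exist on one side but not the other. The export step, the `diffTokenSets` helper, and the sample token names are all hypothetical.

```typescript
// Hypothetical parity check between design-library variables and code tokens.
// Assumes each side can be exported as a flat list of dot-separated paths.
interface ParityReport {
  missingInCode: string[];   // defined in design, absent in code
  missingInDesign: string[]; // defined in code, absent in design
}

function diffTokenSets(designPaths: string[], codePaths: string[]): ParityReport {
  const design = new Set(designPaths);
  const code = new Set(codePaths);
  return {
    missingInCode: Array.from(design).filter((p) => !code.has(p)).sort(),
    missingInDesign: Array.from(code).filter((p) => !design.has(p)).sort(),
  };
}

// Illustrative input: the two sides disagree on one text token name.
const report = diffTokenSets(
  ["color.background.primary", "color.text.primary"],
  ["color.background.primary", "color.text.default"],
);
// report.missingInCode → ["color.text.primary"]
// report.missingInDesign → ["color.text.default"]
console.log(report);
```

Run in CI, a non-empty report would flag drift before it ships rather than during handoff.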

Why we didn’t choose fully semantic tokens

A semantic-only model would have been too brittle for our scale. Products varied widely by audience, workflow complexity, device constraints, and interaction needs. A single semantic layer tends to encode product decisions too early, making reuse harder as contexts diverge.

Instead, we adopted a progressive taxonomy that moves from general to specific—so teams can share foundations while still expressing local intent where it matters.

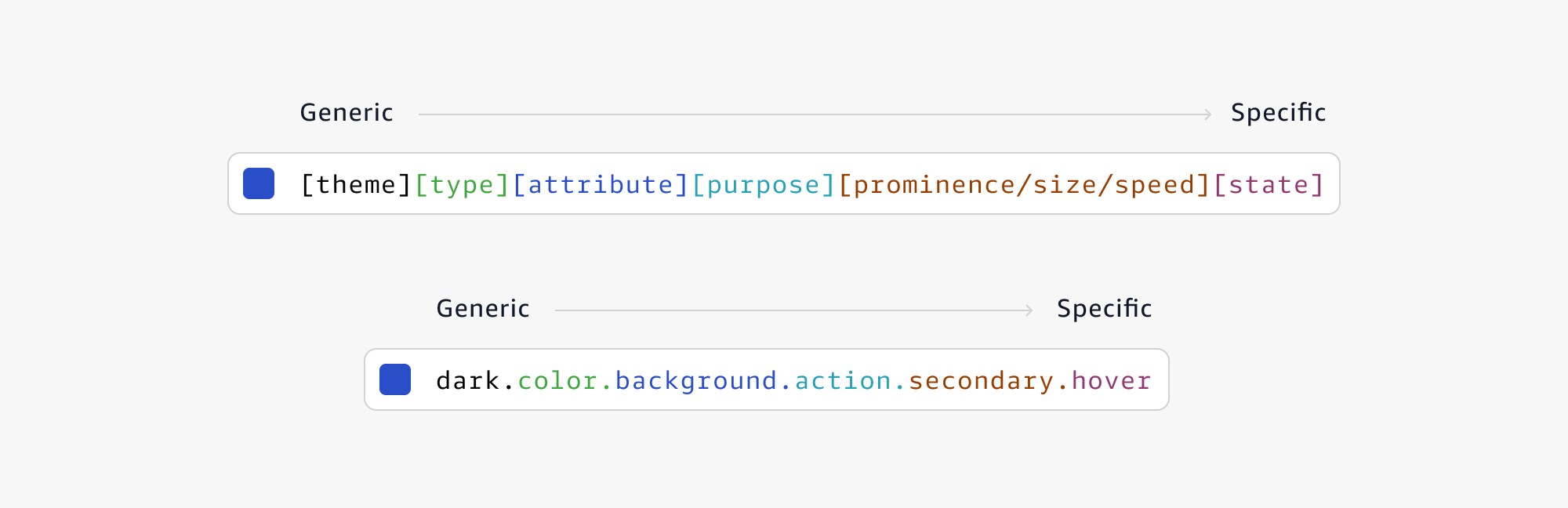

Theme → Type → Attribute → Purpose → Prominence / Size / Speed → State

This structure supports consistent theming and predictable composition while reducing taxonomy debates: teams can see where a value belongs, how it inherits, and what to change without breaking downstream usage.
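To make the ordering concrete, here is a sketch that composes a token name from those levels, general to specific. It assumes a dot separator, treats Prominence / Size / Speed as a single optional slot, and uses invented segment values (`light`, `action`, `strong`, and so on); none of these names come from the actual system.

```typescript
// Hypothetical encoding of the taxonomy:
// Theme → Type → Attribute → Purpose → Prominence / Size / Speed → State
// The "." separator and all segment values are illustrative assumptions.
type Scale = { kind: "prominence" | "size" | "speed"; value: string };

interface TokenPath {
  theme: string;     // e.g. "light"
  type: string;      // e.g. "color", "motion"
  attribute: string; // e.g. "background", "duration"
  purpose: string;   // e.g. "action", "feedback"
  scale?: Scale;     // one of prominence, size, or speed, when relevant
  state?: string;    // e.g. "hover", "disabled"
}

// Joins segments from general to specific, skipping optional ones that are absent.
function tokenName(path: TokenPath): string {
  const segments = [path.theme, path.type, path.attribute, path.purpose];
  if (path.scale) segments.push(path.scale.value);
  if (path.state) segments.push(path.state);
  return segments.join(".");
}

const actionHover = tokenName({
  theme: "light",
  type: "color",
  attribute: "background",
  purpose: "action",
  scale: { kind: "prominence", value: "strong" },
  state: "hover",
});
console.log(actionHover); // "light.color.background.action.strong.hover"

const motionFast = tokenName({
  theme: "light",
  type: "motion",
  attribute: "duration",
  purpose: "feedback",
  scale: { kind: "speed", value: "fast" },
});
console.log(motionFast); // "light.motion.duration.feedback.fast"
```

Because every level has a fixed position, a reviewer can tell from the name alone which level a new value belongs to.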

What changed

The biggest shift was removing interpretation from handoff. With a shared hierarchy between design and code, implementation questions moved from “what does this map to?” to “is this the right decision for the context?”

Teams gained a safer path to extend the system: clearer naming, predictable inheritance, and an explicit place for theme and state. That reduced review churn, improved parity, and made it easier to support multiple experiences without fragmenting the foundations.
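Predictable inheritance can be illustrated with a small resolver: a theme stores only the values it overrides and defers to its parent for everything else. This is a hypothetical sketch. The `Theme` shape, `resolveToken`, the theme names, and the hex values are illustrative, and here the theme acts as a lookup key rather than a segment of the token path.

```typescript
// Hypothetical theme inheritance: each theme overrides only the tokens it
// changes and falls back to its parent theme for everything else.
interface Theme {
  name: string;
  parent?: string;             // name of the theme this one extends
  values: Map<string, string>; // token path (without theme) → value
}

function resolveToken(
  themes: Map<string, Theme>,
  themeName: string,
  path: string,
): string | undefined {
  const visited = new Set<string>();
  let current = themes.get(themeName);
  while (current) {
    // Guard against a misconfigured chain such as A extends B extends A.
    if (visited.has(current.name)) {
      throw new Error(`Cycle in theme inheritance at "${current.name}"`);
    }
    visited.add(current.name);
    if (current.values.has(path)) return current.values.get(path);
    current = current.parent ? themes.get(current.parent) : undefined;
  }
  return undefined; // token not defined anywhere in the chain
}

// Illustrative themes: "dark" overrides one value and inherits the rest.
const themes = new Map<string, Theme>([
  ["base", {
    name: "base",
    values: new Map([
      ["color.background.action.strong", "#0055cc"],
      ["color.text.action.strong", "#ffffff"],
    ]),
  }],
  ["dark", {
    name: "dark",
    parent: "base",
    values: new Map([["color.background.action.strong", "#4c9aff"]]),
  }],
]);

console.log(resolveToken(themes, "dark", "color.background.action.strong")); // "#4c9aff"
console.log(resolveToken(themes, "dark", "color.text.action.strong"));       // "#ffffff"
```

Keeping overrides sparse means a new theme inherits every token it does not explicitly change, so adding a theme does not require copying the full set.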

Tradeoffs and maturity

The tradeoff was upfront investment: taxonomy alignment, migration planning, education, and ongoing governance to keep naming discipline intact. Structure only works if teams can maintain it.

We made that investment intentionally. The result was a token system that was easier to reason about, safer to extend, and resilient as new products, themes, and constraints were introduced.

Reflection

For me, this was a clear reminder that scaling UI isn’t primarily a visual problem—it’s a clarity problem. A well-structured token model becomes shared language: it reduces ambiguity, improves collaboration, and gives teams a durable way to ship consistent experiences at speed.